Contact Centre AI Demand Diagnosis: How to Tell Whether Your Implementation Will Reduce Demand or Just Relocate It

- Graeme Colville

- 5 days ago


Before your operation commits to an AI implementation - before the vendor is engaged, before the use cases are scoped, before the pilot timeline is agreed - one analysis determines whether the deployment will reduce your workload or accelerate it.

That analysis is a contact centre AI demand diagnosis of the contact types being proposed for automation.

It is the step that the vendor sales motion skips.

It is the step that internal project timelines apply pressure to bypass.

And it is the only step that establishes whether the contacts being automated are worth automating.

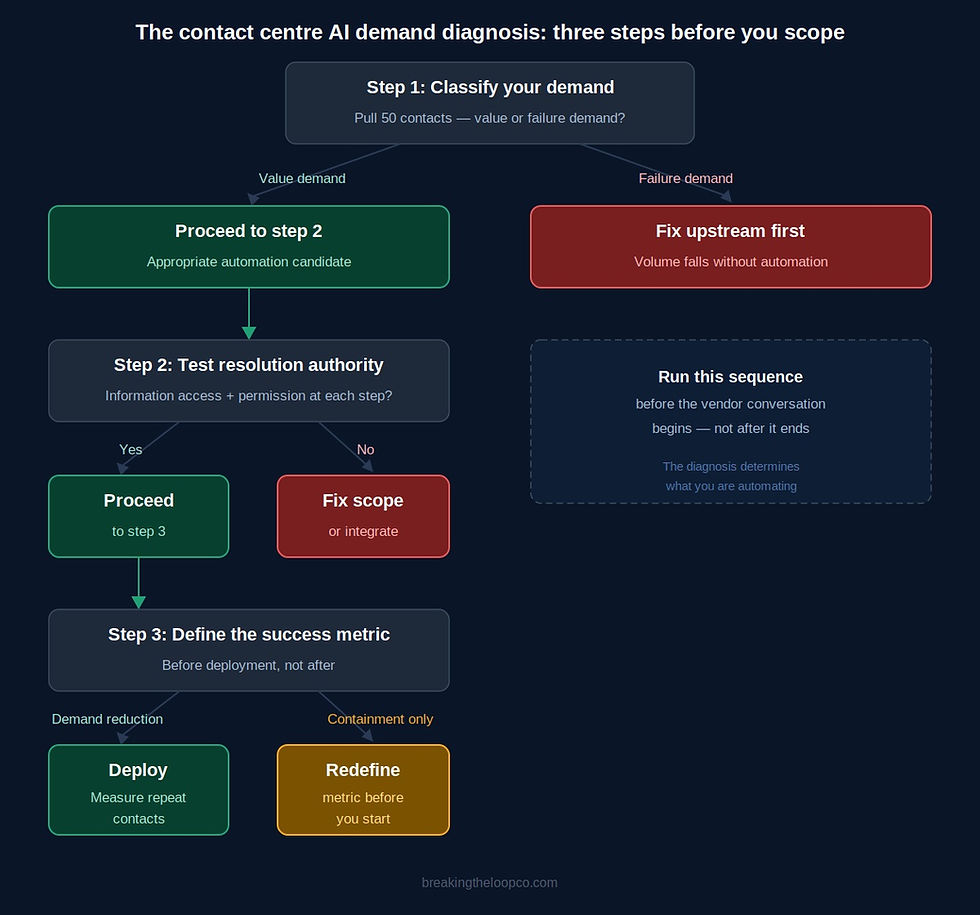

This post sets out the diagnostic framework across three steps.

Running the Contact Centre AI Demand Diagnosis: Three Steps Before You Scope

Step 1: Classify Your Demand

For each contact type being proposed for AI deployment, pull a sample of fifty contacts and classify them into two categories.

Value demand: contacts the customer needs to make and the operation needs to handle.

The customer has a genuine reason to be in contact.

The interaction serves a purpose that cannot be removed by fixing something upstream.

Policy renewals, account queries, legitimate claims notifications - these are value demand contacts. They are appropriate automation candidates.

Failure demand: contacts that exist because something in the system failed to resolve the customer's situation.

The customer is calling back because the previous interaction did not resolve their issue.

They are chasing a commitment that was made but not fulfilled.

They are seeking information the process should have provided proactively.

They are contacting again about the same underlying issue under a different presenting reason.

These are failure demand contacts. Automating them does not remove them. It handles them faster.

The proportion of failure demand in your highest-volume contact types is the single most important number in any AI business case.

If failure demand represents more than twenty percent of the target contact type, automation should not be the first intervention.

Address the structural cause of the failure demand first. The volume will fall as a consequence, and what remains is a cleaner automation candidate.

To make this concrete: imagine you are running a contact centre AI demand diagnosis on your highest-volume contact type - claims status enquiries.

You pull fifty contacts and work through each one.

For thirty-two of them, the customer is calling because they have had no update on their claim for a period they consider unreasonable.

They are not calling because the claim is complex or because they need to provide information.

They are calling because the operation has not communicated with them.

Those are failure demand contacts.

The claims process is generating avoidable volume.

Automating those contacts means building a chatbot to handle calls that should not be happening.

For the remaining eighteen, customers are calling to provide additional information requested by the adjudicator, or to confirm receipt of a decision.

Those are value demand contacts - legitimate, appropriate automation candidates.

The analysis takes an afternoon.

The finding changes the business case entirely.

Sixty-four percent of the contact type is failure demand, so the operation should not be automating it.

Only thirty-six percent is a genuine automation candidate, and the claims communication process needs fixing before the volume case for automation makes sense.
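
The arithmetic behind this worked example can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the labels `"failure"` and `"value"`, the function names, and the recommendation strings are all assumptions made for the sketch. The twenty percent threshold and the 32/18 split come from the text above.

```python
from collections import Counter

FAILURE_THRESHOLD = 0.20  # cut-off stated in the post: above this, fix the cause first


def failure_demand_share(labels):
    """Return the fraction of sampled contacts labelled 'failure'."""
    counts = Counter(labels)
    total = counts["value"] + counts["failure"]
    if total == 0:
        raise ValueError("sample contains no classified contacts")
    return counts["failure"] / total


def recommend(labels, threshold=FAILURE_THRESHOLD):
    """Apply the twenty percent rule to a manually classified sample."""
    if failure_demand_share(labels) > threshold:
        return "fix upstream cause first"
    return "automation candidate"


# Worked example from the text: 32 failure demand and 18 value demand contacts.
sample = ["failure"] * 32 + ["value"] * 18
share = failure_demand_share(sample)
print(f"failure demand: {share:.0%}, value demand: {1 - share:.0%}")
print(recommend(sample))
```

The classification itself stays a human judgement made contact by contact. The code only tallies the result and applies the threshold.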

Step 2: Test Resolution in the Automated Journey

For the contact types where demand classification indicates value demand dominates, the next step is to map the automated interaction end-to-end and test whether the system has the authority and information to resolve the contact at each decision point.

This is a design test, not a technology test.

The question is not whether the chatbot can understand the customer's request.

It is whether the system has access to the information needed to answer it, and whether it has the permission to take the action the customer needs.

A chatbot that can accurately identify that a customer wants to know the status of their claim, but cannot access the claims management system to provide that status, cannot resolve the contact.

It can only collect and route - which makes it a triage layer, not a resolution channel. A triage layer with an automated front end is not an efficiency gain.

It is friction added before the same agent interaction that was happening before.

Map the journey. At each decision point, identify two things: does the system have the information? Does the system have the authority?

Where the answer to either question is no, the automated journey will transfer to an agent.

The worked example makes the failure mode visible.

A billing query chatbot is being designed to handle payment plan requests.

The journey maps correctly through identification, account verification, and query classification.

At the point where the customer asks to change their payment date, the chatbot hits a system constraint: payment date changes require API access to the billing platform, which the chatbot does not have.

The journey ends in transfer. Payment date change contacts - which represent forty percent of the billing query volume - will all transfer regardless of how well the chatbot performs at the front end.

This finding, identified in the mapping stage before deployment, changes the scope of the implementation.

Either the API integration is built before go-live, or the payment date change contact type is removed from the automation scope.

Neither outcome is a failure - discovering the constraint before deployment rather than after is exactly what the design test is for.

High transfer rates in the mapped journey reveal that the automation scope does not match the resolution requirement.

Finding them before deployment costs an afternoon.

Finding them after deployment costs the credibility of the programme.
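
The two-question test at each decision point can be written down as a simple journey map. The sketch below is illustrative only: the `DecisionPoint` structure, the function names, and the decision to mark the payment date step as lacking both information and authority are assumptions. The step names and the forty percent share are taken from the billing example above.

```python
from dataclasses import dataclass


@dataclass
class DecisionPoint:
    name: str
    has_information: bool  # can the system read the data it needs at this step?
    has_authority: bool    # is the system permitted to take the action at this step?


def transfer_points(journey):
    """Return the names of decision points that force a transfer to an agent."""
    return [p.name for p in journey
            if not (p.has_information and p.has_authority)]


def minimum_transfer_rate(journeys, volume_share):
    """Sum the volume share of every contact type whose journey has a blocked step."""
    return sum(volume_share[name]
               for name, journey in journeys.items()
               if transfer_points(journey))


# Hypothetical map of the payment date change journey described in the text.
payment_date_change = [
    DecisionPoint("identification", True, True),
    DecisionPoint("account verification", True, True),
    DecisionPoint("query classification", True, True),
    # Assumed: no billing platform API access means neither read nor write.
    DecisionPoint("change payment date", False, False),
]

blocked = transfer_points(payment_date_change)
floor = minimum_transfer_rate(
    {"payment date change": payment_date_change},
    {"payment date change": 0.40},  # share of billing query volume, from the text
)
print(blocked)
print(f"transfer rate cannot fall below {floor:.0%}")
```

A spreadsheet does the same job. The point of writing it down in any form is that the transfer floor is visible before a single customer reaches the chatbot.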

Step 3: Define the Success Metric Before Deployment

The success metric for an AI implementation must be agreed before deployment begins - not selected from the available data after the fact.

This is where most contact centre AI demand diagnosis frameworks stop short.

They classify demand, they test the journey design, and then they hand over to the implementation team - who arrive at the post-deployment review with containment rate data and declare success.

The structural problem with that sequence is that containment rate is the wrong metric, for reasons the previous posts in this series have established.

A contained contact is not the same as a resolved contact.

An operation that agrees containment rate as its success measure before deployment will find what it is looking for - and miss the signal that matters.

The metric that matters is demand reduction at customer level.

If the total number of contacts from the customer cohort targeted by the automation falls after deployment, the implementation is working.

The contacts that were addressable by automation have been handled, and customers are not returning because their issues were resolved.

Agreeing this in advance is a political act as much as a technical one. Containment rate is the metric vendors report because it is visible immediately after deployment.

Demand reduction at customer level takes weeks to measure - it requires matching post-interaction cohorts against inbound volume data across a defined window.

The pressure to report early success is real, and containment rate is available.

Agreeing before deployment that containment rate alone is insufficient - that the success measure requires demonstration of reduced repeat contacts from the same customer cohort - changes what the post-implementation review finds, and changes the decisions made based on it.

To make this work practically, three commitments are needed before deployment begins.

First, define the cohort: which customers interacted with the automation, and over what period.

Second, define the measurement window: how long after the automated interaction will you track inbound contacts from the same customer? Seven to fourteen days is standard for most contact types.

Third, define the threshold: what reduction in repeat contact rate from the automated cohort constitutes success.

If the cohort is returning at a rate equal to or higher than the non-automated cohort, the automation is not resolving demand.

These commitments need to be part of the vendor contract if possible, and part of the internal governance framework regardless.
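
The three commitments above (cohort, window, threshold) translate into a small calculation. The following is a sketch under stated assumptions: the data shapes, the function names, the customer IDs, the dates, and the ten-point reduction threshold are all hypothetical and chosen only to show the comparison. The fourteen-day window is the upper end of the range given in the text.

```python
from datetime import datetime, timedelta


def repeat_contact_rate(cohort, inbound, window_days=14):
    """Share of cohort customers who contact again within the window.

    cohort:  dict of customer_id -> datetime of the initial interaction
    inbound: iterable of (customer_id, datetime) for later inbound contacts
    """
    if not cohort:
        raise ValueError("cohort is empty")
    window = timedelta(days=window_days)
    returned = set()  # a customer counts once, however often they return
    for customer_id, contacted_at in inbound:
        start = cohort.get(customer_id)
        if start is not None and start < contacted_at <= start + window:
            returned.add(customer_id)
    return len(returned) / len(cohort)


def automation_resolves_demand(automated_rate, agent_rate, min_reduction):
    """True only if the automated cohort returns less often by the agreed margin."""
    return (automated_rate < agent_rate
            and (agent_rate - automated_rate) >= min_reduction)


# Illustrative data only, not real results.
day0 = datetime(2024, 1, 1)
automated = {cid: day0 for cid in ("a1", "a2", "a3", "a4")}
agent = {cid: day0 for cid in ("b1", "b2", "b3", "b4")}
inbound = [
    ("a1", day0 + timedelta(days=3)),
    ("a1", day0 + timedelta(days=5)),   # same customer, counted once
    ("a2", day0 + timedelta(days=20)),  # outside the 14-day window
    ("b1", day0 + timedelta(days=2)),
    ("b2", day0 + timedelta(days=10)),
]

automated_rate = repeat_contact_rate(automated, inbound)
agent_rate = repeat_contact_rate(agent, inbound)
success = automation_resolves_demand(automated_rate, agent_rate, min_reduction=0.10)
print(automated_rate, agent_rate, success)
```

Note that equal rates return `False` even with a zero threshold, matching the rule that a cohort returning at the same rate as the non-automated cohort has not had its demand resolved.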

The question of whether the AI deployment reduced demand - not whether it contained contacts - is the question that determines whether the implementation succeeded.

Getting that question agreed before deployment is the hardest and most important part of the contact centre AI demand diagnosis.

Why This Sequence Matters

The three steps in sequence - classify demand, test resolution authority, define success metrics before deployment - are not individually complex.

Each one is a half-day exercise for an operations leader who knows their contact types.

Together they change what gets deployed, what it is expected to do, and how success is measured.

The reason this sequence is not standard practice is not that it is difficult. It is that it introduces delay and uncertainty into a project that already has political momentum.

The vendor wants to start the pilot.

The sponsor wants to show progress.

The team wants to get to implementation.

The demand diagnosis sits before all of that, and it might - if the failure demand proportion is high or the resolution authority gaps are significant - conclude that the proposed implementation is premature.

That is not a failure of the diagnostic.

It is the diagnostic doing its job.

An AI implementation that reduces demand is worth doing.

An AI implementation that relocates demand - faster, cheaper, and less visibly - is a liability dressed as a success metric.

The contact centre AI demand diagnosis tells you which one you are building before you build it.

The Find Your Loop diagnostic identifies which structural failure patterns are generating your highest-volume contact types before you scope the deployment - so you know what you are automating before the pilot begins.
